MapReduce: A Deep Dive into the Distributed Data Processing Framework

MapReduce is a highly influential programming model that has transformed the way we process and analyze large-scale data sets.

Introduced by Jeffrey Dean and Sanjay Ghemawat of Google in their 2004 paper, “MapReduce: Simplified Data Processing on Large Clusters”, MapReduce has since inspired numerous data processing systems, including Apache Hadoop. In this technical blog post, we’ll take a closer look at the inner workings of MapReduce, its key components, and how it can be used to solve complex data processing tasks.

How MapReduce Works

MapReduce is designed to process and generate large data sets in parallel across many machines. Its programming model centers on two user-supplied functions: the Map function and the Reduce function.

Map function

The Map function takes an input key-value pair and produces a set of intermediate key-value pairs. Because each input record is processed independently of every other, map calls can run in parallel across many nodes. The function's signature can be written as:

map (k1, v1) -> list(k2, v2)

Here, (k1, v1) represents the input key-value pairs, and (k2, v2) represents the intermediate key-value pairs generated by the Map function.
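As an illustration, here is a minimal Map function for the classic word-count example, written in Go to match the study project later in this post. The `KeyValue` type and the `Map` signature are assumptions for this sketch, not taken from any particular library: k1 is a document name, v1 is its contents, and each emitted pair is (word, "1").

```go
package main

import (
	"fmt"
	"strings"
	"unicode"
)

// KeyValue is a hypothetical type representing one intermediate (k2, v2) pair.
type KeyValue struct {
	Key   string
	Value string
}

// Map implements map(k1, v1) -> list(k2, v2) for word counting.
// filename (k1) is unused here; contents (v1) is split into words,
// and one (word, "1") pair is emitted per occurrence.
func Map(filename string, contents string) []KeyValue {
	// Treat any rune that is not a letter as a separator, dropping punctuation.
	words := strings.FieldsFunc(contents, func(r rune) bool {
		return !unicode.IsLetter(r)
	})
	kvs := make([]KeyValue, 0, len(words))
	for _, w := range words {
		// Lower-case so "The" and "the" are counted as the same word.
		kvs = append(kvs, KeyValue{Key: strings.ToLower(w), Value: "1"})
	}
	return kvs
}

func main() {
	fmt.Println(Map("doc1.txt", "the cat saw the dog"))
	// [{the 1} {cat 1} {saw 1} {the 1} {dog 1}]
}
```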

Reduce function

After the Map phase, the framework groups all intermediate values that share the same key. The Reduce function is then called once per key, receiving that key together with the list of its values, and merges them into the final output. Its signature can be written as:

reduce (k2, list(v2)) -> list(v3)

Here, (k2, list(v2)) represents the grouped intermediate key-value pairs, and list(v3) represents the final output values.
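Continuing the word-count sketch, a matching Reduce function might look like the following. The `Reduce` name and string-typed values are again assumptions. The function sums the values rather than just counting them, so it would still be correct if some values had already been partially summed (for example, by a combiner).

```go
package main

import (
	"fmt"
	"strconv"
)

// Reduce implements reduce(k2, list(v2)) -> list(v3) for word counting:
// it receives one word and every count emitted for it, and returns a
// single-element list holding the total as a string.
func Reduce(key string, values []string) []string {
	total := 0
	for _, v := range values {
		n, err := strconv.Atoi(v)
		if err != nil {
			continue // skip malformed values instead of failing the whole task
		}
		total += n
	}
	return []string{strconv.Itoa(total)}
}

func main() {
	fmt.Println(Reduce("the", []string{"1", "1", "1"})) // [3]
}
```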

By abstracting the details of distributed computing, fault tolerance, and data partitioning, MapReduce allows developers to focus on implementing the core logic for their specific use case without worrying about the complexities of distributed systems.

Anatomy of a MapReduce Job

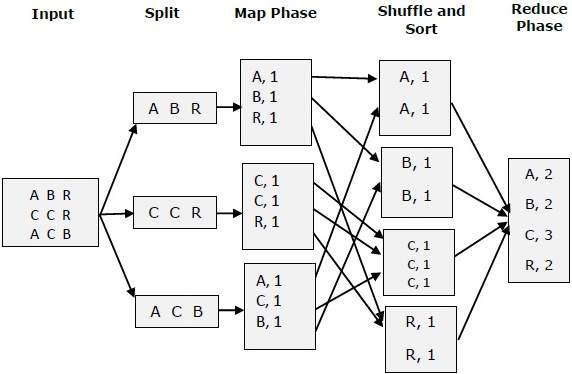

A MapReduce job typically follows these steps:

Input data partitioning: The input data is divided into chunks, which are then distributed across multiple nodes. This partitioning allows the Map function to process the data in parallel.

Map phase: Each node processes its assigned input data chunk independently, generating intermediate key-value pairs.

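Shuffle phase: The intermediate key-value pairs are redistributed among the nodes based on their keys, typically using a partitioning function such as hash(key) mod R, where R is the number of reduce tasks. This ensures that all pairs with the same key reach the same reduce task, where they are sorted by key so that equal keys are grouped together.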

Reduce phase: Each node processes its assigned intermediate key-value pairs, applying the Reduce function to generate the final output values.

Output: The final output values are written to storage, often in a distributed file system.
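To make the steps concrete, the sketch below runs them sequentially in a single Go process: each input chunk is mapped independently, every intermediate pair is routed to one of `nReduce` buckets by hash(key) mod nReduce (standing in for the shuffle), and each bucket is sorted by key and reduced. The helper names (`mapF`, `reduceF`, `partition`, `RunJob`) and the choice of FNV-1a as the hash are assumptions for illustration. A real system runs these steps on different machines and writes one output file per reduce task instead of returning a map.

```go
package main

import (
	"fmt"
	"hash/fnv"
	"sort"
	"strconv"
	"strings"
)

type KeyValue struct {
	Key, Value string
}

// mapF and reduceF are illustrative word-count functions.
func mapF(chunk string) []KeyValue {
	var out []KeyValue
	for _, w := range strings.Fields(chunk) {
		out = append(out, KeyValue{Key: w, Value: "1"})
	}
	return out
}

func reduceF(key string, values []string) string {
	sum := 0
	for _, v := range values {
		n, _ := strconv.Atoi(v)
		sum += n
	}
	return strconv.Itoa(sum)
}

// partition chooses the reduce task for a key: hash(key) mod nReduce.
func partition(key string, nReduce int) int {
	h := fnv.New32a()
	h.Write([]byte(key))
	return int(h.Sum32() % uint32(nReduce))
}

// RunJob simulates the job steps sequentially in one process.
func RunJob(chunks []string, nReduce int) map[string]string {
	// Map phase: each chunk is processed independently, and each output
	// pair is placed in the bucket for its partition (the "shuffle").
	buckets := make([][]KeyValue, nReduce)
	for _, c := range chunks {
		for _, kv := range mapF(c) {
			p := partition(kv.Key, nReduce)
			buckets[p] = append(buckets[p], kv)
		}
	}
	// Reduce phase: sort each bucket so equal keys are adjacent,
	// then call reduceF once per distinct key with all of its values.
	output := make(map[string]string)
	for _, b := range buckets {
		sort.Slice(b, func(i, j int) bool { return b[i].Key < b[j].Key })
		for i := 0; i < len(b); {
			j := i
			var vals []string
			for j < len(b) && b[j].Key == b[i].Key {
				vals = append(vals, b[j].Value)
				j++
			}
			output[b[i].Key] = reduceF(b[i].Key, vals)
			i = j
		}
	}
	return output
}

func main() {
	chunks := []string{"a b a", "b c", "a"}
	fmt.Println(RunJob(chunks, 3)) // map[a:3 b:2 c:1]
}
```

Because the partition depends only on the key, every occurrence of a word lands in the same bucket no matter which chunk produced it, which is the guarantee the shuffle phase provides.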

Fault Tolerance and Scalability

One of the key strengths of MapReduce is its ability to handle failures gracefully and scale across thousands of machines. The framework achieves this by:

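- Data replication: Input data is stored in a distributed file system (GFS in Google's original implementation, HDFS in Hadoop) that keeps replicas of each chunk on multiple nodes, so a single node failure does not cause data loss.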

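- Task reassignment: The coordinator (the “master” in the original paper) periodically pings workers. If a worker stops responding, its in-progress tasks are reassigned to other available workers; its completed Map tasks are also re-executed, because their output was stored on the failed machine's local disk.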

- Automatic parallelization: MapReduce automatically parallelizes the Map and Reduce functions across multiple nodes, allowing the framework to scale as needed.

Use Cases

MapReduce has been used to solve a wide range of data processing problems, including:

Text processing: Counting word frequencies, searching for specific patterns, and analyzing sentiment in large text corpora.

Log analysis: Processing server logs to identify trends, errors, or security threats.

Web indexing: Generating an inverted index of web pages to facilitate search engine queries.

Graph processing: Analyzing the structure of large graphs, such as social networks or web link graphs.

Machine learning: Training machine learning models on large-scale data sets.
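The web-indexing use case fits the model naturally: Map emits a (word, document ID) pair for every word, and Reduce turns all the IDs seen for a word into a sorted, de-duplicated posting list. The function names, and the in-memory grouping in `main` that stands in for the shuffle, are assumptions for this sketch.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

type KeyValue struct{ Key, Value string }

// indexMap emits (word, docID) for every word in the document.
func indexMap(docID, contents string) []KeyValue {
	var out []KeyValue
	for _, w := range strings.Fields(strings.ToLower(contents)) {
		out = append(out, KeyValue{Key: w, Value: docID})
	}
	return out
}

// indexReduce turns all docIDs seen for a word into a sorted,
// de-duplicated posting list.
func indexReduce(word string, docIDs []string) []string {
	seen := make(map[string]bool)
	var postings []string
	for _, id := range docIDs {
		if !seen[id] {
			seen[id] = true
			postings = append(postings, id)
		}
	}
	sort.Strings(postings)
	return postings
}

func main() {
	var kvs []KeyValue
	kvs = append(kvs, indexMap("page2", "go is fast")...)
	kvs = append(kvs, indexMap("page1", "Go is simple and go is fun")...)
	// Group values by key, standing in for the shuffle phase.
	grouped := make(map[string][]string)
	for _, kv := range kvs {
		grouped[kv.Key] = append(grouped[kv.Key], kv.Value)
	}
	fmt.Println(indexReduce("go", grouped["go"])) // [page1 page2]
}
```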

Implementing a MapReduce System with Go

To demonstrate the power of the MapReduce framework and explore its concepts in practice, I’ve built a simple study project using Go. The project includes a coordinator server and worker nodes that communicate using Go’s net/rpc package.

The coordinator server assigns tasks to worker nodes, tracks their status, and manages job completion.

The worker nodes connect to the coordinator, request tasks, process the tasks, and report back once they’re finished.
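The coordinator/worker interaction over `net/rpc` can be sketched as follows. This is not the code from the project: `Coordinator`, `RequestTask`, `TaskArgs`, and `TaskReply` are hypothetical names, and the sketch serves over a local TCP port and only hands out file names. What it does reflect is the shape `net/rpc` expects: an exported method whose first argument is an exported type, whose second argument is a pointer to an exported reply type, and which returns `error`, called from the client as `"Coordinator.RequestTask"`.

```go
package main

import (
	"fmt"
	"log"
	"net"
	"net/rpc"
	"sync"
)

// TaskArgs and TaskReply are hypothetical RPC message types.
type TaskArgs struct {
	WorkerID int
}

type TaskReply struct {
	TaskID   int // -1 when there is nothing left to do
	Filename string
}

// Coordinator hands out one input file per request.
type Coordinator struct {
	mu    sync.Mutex
	files []string
	next  int
}

// RequestTask has the method shape net/rpc requires.
func (c *Coordinator) RequestTask(args *TaskArgs, reply *TaskReply) error {
	c.mu.Lock()
	defer c.mu.Unlock()
	if c.next >= len(c.files) {
		reply.TaskID = -1
		return nil
	}
	reply.TaskID = c.next
	reply.Filename = c.files[c.next]
	c.next++
	return nil
}

// startCoordinator serves a Coordinator on a free local TCP port and returns its address.
func startCoordinator(files []string) (string, error) {
	server := rpc.NewServer()
	if err := server.Register(&Coordinator{files: files}); err != nil {
		return "", err
	}
	ln, err := net.Listen("tcp", "127.0.0.1:0")
	if err != nil {
		return "", err
	}
	go server.Accept(ln) // Accept serves each incoming connection in its own goroutine
	return ln.Addr().String(), nil
}

// runWorker requests tasks until the coordinator reports none are left.
func runWorker(addr string, id int) ([]string, error) {
	client, err := rpc.Dial("tcp", addr)
	if err != nil {
		return nil, err
	}
	defer client.Close()
	var done []string
	for {
		var reply TaskReply // fresh struct per call; see the note below
		if err := client.Call("Coordinator.RequestTask", &TaskArgs{WorkerID: id}, &reply); err != nil {
			return done, err
		}
		if reply.TaskID == -1 {
			return done, nil
		}
		// A real worker would run Map on reply.Filename and report back here.
		done = append(done, reply.Filename)
	}
}

func main() {
	addr, err := startCoordinator([]string{"pg-1.txt", "pg-2.txt"})
	if err != nil {
		log.Fatal(err)
	}
	files, err := runWorker(addr, 1)
	if err != nil {
		log.Fatal(err)
	}
	fmt.Println(files) // [pg-1.txt pg-2.txt]
}
```

A fresh `TaskReply` is declared on every loop iteration on purpose: `net/rpc` encodes messages with `encoding/gob`, which does not transmit zero-valued fields, so reusing one reply struct across calls could leave stale values from an earlier reply.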

You can view it on my GitHub: Simple MapReduce Implementation.

MapReduce is a powerful framework that has revolutionized the way we process large-scale data sets. By providing a simple yet effective programming model, it has made distributed and parallel computing more accessible to developers. If you’re interested in diving deeper into distributed systems and large-scale data processing, exploring MapReduce is an excellent starting point. The Google paper and the Go-based study project are valuable resources for understanding the concepts and putting them into practice.